Work

Select projects from 15+ years building enterprise platforms, AI-powered experiences, and systems at scale.

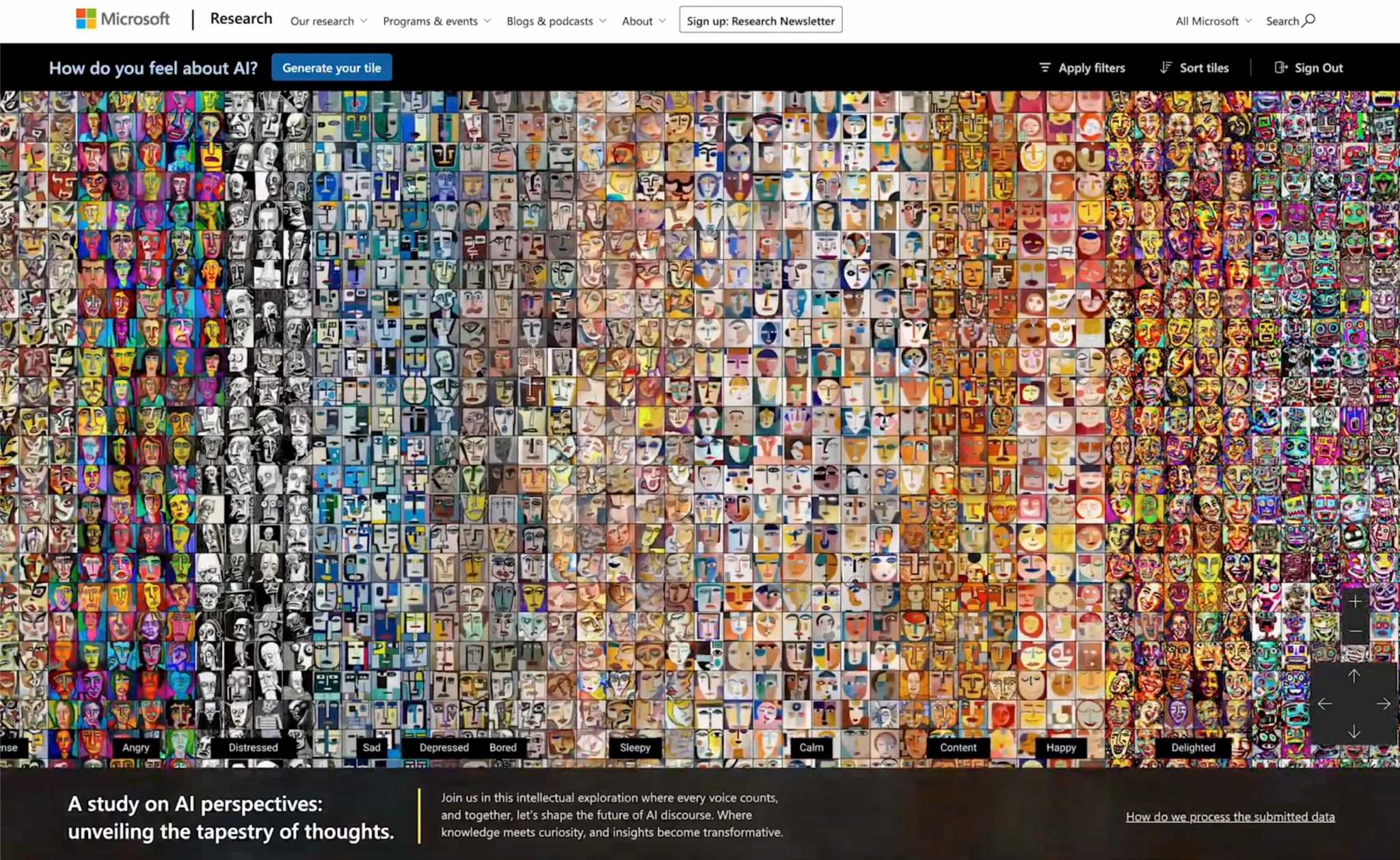

Microsoft Mosaic

We made a community mural with AI: an interactive experience that turned public feelings about artificial intelligence into generative art.

In mid-2024, Microsoft Research launched Mosaic: an interactive, AI-powered experience that visualized public sentiment about artificial intelligence. The experiment asked a deceptively simple question: "How do you feel about AI?" Using OpenAI's models, it translated each participant's answer into a stylized portrait, creating a living mural of collective emotion.

Mosaic wasn't a traditional software product; it was equal parts art, research, and experiment. The vision originated with Asta Roseway, a principal researcher known within Microsoft for blending science and creativity. She imagined an expressive, data-driven experience that could help people reflect on how AI made them feel. Our team at Fueled was engaged to bring that vision to life.

From Vision to Interaction

The challenge wasn't just to build a site, but to create something that felt like art. We framed Mosaic not as a dashboard or gallery, but as a unified, immersive canvas — a place where each visitor's feelings would be visualized and positioned alongside thousands of others.

The design avoided traditional web conventions. No scrolling, no text-heavy layouts. Just one large, panning interface built around AI-generated portraits that invited exploration. Every interaction was intentional, from the camera movement to the micro-animations.

We selected Microsoft's Fluent UI system as the base design framework, then extended its dark mode with custom layered glass effects and motion-driven transitions that deepened the sense of presence. The result felt less like a user interface and more like an environment.

AI-Powered Sentiment to Generative Art

On the backend, we deployed two models hosted in Azure OpenAI Service. First, ChatGPT analyzed each participant's response and identified its sentiment (an emotion such as hopeful, anxious, or curious) along with its intensity. That structured sentiment was then paired with style instructions and passed to DALL·E, which generated a portrait matching the emotional tone.
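The hand-off between the two models is easier to follow in a small TypeScript sketch. It assumes the analysis step returns an emotion label plus an intensity between 0 and 1. The type names, the style strings, and `buildPortraitPrompt` are all hypothetical, because this case study does not describe Mosaic's actual schema or prompts. The sketch makes no Azure OpenAI calls. It only shows how structured sentiment and style instructions could be combined into a single image prompt.

```typescript
// Hypothetical shape of the structured sentiment returned by the analysis step.
type Emotion = "hopeful" | "anxious" | "curious";

interface Sentiment {
  emotion: Emotion;
  intensity: number; // assumed scale: 0 (faint) to 1 (overwhelming)
}

// Illustrative style instructions per emotion; not Mosaic's real prompts.
const STYLE_BY_EMOTION: Record<Emotion, string> = {
  hopeful: "warm golden light, upward composition, soft gradients",
  anxious: "cool desaturated palette, tight framing, jittery brush strokes",
  curious: "vivid accent colors, playful geometry, open negative space",
};

// Maps a 0..1 intensity (clamped) onto a coarse verbal modifier the image model can follow.
function intensityWord(intensity: number): string {
  const x = Math.min(1, Math.max(0, intensity));
  if (x < 0.34) return "subtle";
  if (x < 0.67) return "moderate";
  return "intense";
}

// Combines the structured sentiment with its style instructions into one image prompt.
function buildPortraitPrompt(s: Sentiment): string {
  return [
    "Stylized abstract portrait expressing",
    `${intensityWord(s.intensity)} ${s.emotion} feelings about AI.`,
    `Style: ${STYLE_BY_EMOTION[s.emotion]}.`,
    "No text, no logos.",
  ].join(" ");
}

const examplePrompt = buildPortraitPrompt({ emotion: "anxious", intensity: 0.9 });
console.log(examplePrompt);
```

With this design, the image prompt is assembled from a fixed vocabulary rather than from the participant's raw words. That makes tone easier to control across thousands of portraits.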

Making that process feel seamless required more than API calls. We designed a system that translated a wide range of free-form answers into meaningful emotional categories, then guided the models to generate visuals consistent with those categories. Responses like "a little nervous" and "totally terrified" needed to produce portraits that looked appropriately different. We built fallback logic for ambiguous inputs, introduced controlled randomness so that similar sentiments did not yield repetitive visuals, and added safeguards against prompt manipulation.
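The following TypeScript sketch shows one way the fallback logic, controlled randomness, and prompt-manipulation safeguards could fit together. Everything in it is an assumption for illustration: the JSON reply shape, the `neutral` fallback category, the `MIN_CONFIDENCE` threshold, the `VARIATIONS` list, and the helper names.

Strict validation is one possible safeguard. Whatever a participant types, only a whitelisted label and clamped numbers can flow onward. The randomness is seeded from a participant ID, hashed with FNV-1a and fed to the mulberry32 generator. Each portrait's variation is therefore reproducible, while similar sentiments still diverge.

```typescript
// Allowed labels; anything else from the analysis model is rejected.
// "neutral" is a hypothetical fallback category for ambiguous answers.
const EMOTIONS = ["hopeful", "anxious", "curious", "neutral"] as const;
type Emotion = (typeof EMOTIONS)[number];

interface Sentiment {
  emotion: Emotion;
  intensity: number;  // assumed 0..1 scale
  confidence: number; // assumed 0..1, reported by the analysis model
}

const MIN_CONFIDENCE = 0.6; // hypothetical threshold for "ambiguous"
const fallback = (): Sentiment => ({ emotion: "neutral", intensity: 0.5, confidence: 0 });
const clamp01 = (x: number) => Math.min(1, Math.max(0, x));

// Validates the model's JSON reply. Malformed, off-list, or low-confidence
// replies fall back to a neutral sentiment, so text smuggled into an answer
// can never reach the image prompt verbatim.
function parseSentiment(raw: string): Sentiment {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return fallback(); // not valid JSON
  }
  if (typeof data !== "object" || data === null) return fallback();
  const { emotion, intensity, confidence } = data as Record<string, unknown>;
  if (typeof emotion !== "string" || !(EMOTIONS as readonly string[]).includes(emotion)) {
    return fallback(); // label outside the whitelist
  }
  if (typeof intensity !== "number" || typeof confidence !== "number") return fallback();
  if (confidence < MIN_CONFIDENCE) return fallback(); // ambiguous answer
  return { emotion: emotion as Emotion, intensity: clamp01(intensity), confidence: clamp01(confidence) };
}

// 32-bit FNV-1a hash: turns a participant ID into a numeric seed.
function fnv1a(str: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < str.length; i++) {
    h ^= str.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

// mulberry32: small seeded PRNG returning floats in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Hypothetical composition variants mixed into otherwise identical prompts.
const VARIATIONS = ["close-up", "profile view", "wide composition", "layered collage"];

// Same participant ID always yields the same variant; different IDs spread out.
function pickVariation(participantId: string): string {
  const rng = mulberry32(fnv1a(participantId));
  return VARIATIONS[Math.floor(rng() * VARIATIONS.length)];
}
```

Seeding per participant, rather than calling `Math.random()`, keeps each result reproducible. That could help when regenerating or debugging a specific portrait.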

Engineered for Performance and Emotion

Mosaic had to feel immersive — fast, fluid, and deeply responsive, even while rendering thousands of AI-generated images in real time.

We built the experience as a custom React and Next.js application. After prototyping several rendering approaches, we selected Three.js for the WebGL performance needed to sustain smooth, high frame rates. Paired with React Three Fiber (a React renderer for Three.js) and the Drei helper library, it let us compose the scene declaratively as React components while retaining fine-grained control over rendering.

Performance was a design requirement from the outset, since a low frame rate would break immersion. We built custom debugging tools to visualize canvas behavior in real time and used them to optimize draw calls, bounding-box detection, and frustum culling, so that only visible tiles rendered at any moment. Custom lazy loading driven by camera position fetched portraits as they approached the viewport, with Azure Blob Storage providing scalable delivery.
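The culling and lazy-loading ideas can be sketched for a simplified layout: portraits on a flat grid of square tiles, viewed by a perspective camera pointed straight at the mural. Under that assumption, the frustum's footprint on the mural plane is a rectangle of height `2 * distance * tan(fov / 2)`. Tile-level culling then reduces to finding the grid cells that rectangle overlaps.

Three.js already skips objects outside the frustum by default (`Object3D.frustumCulled`). A grid-level pass like this one would go further and avoid creating or fetching off-screen tiles at all. The layout, `TILE_SIZE`, and the function names `visibleTiles` and `planLoads` are hypothetical. This is not Mosaic's actual code.

```typescript
interface Camera {
  x: number;        // world position the camera is centered on
  y: number;
  distance: number; // distance from the camera to the mural plane
  fovDeg: number;   // vertical field of view, in degrees
  aspect: number;   // viewport width / height
}

interface Tile { col: number; row: number }

const TILE_SIZE = 1; // hypothetical: world units per portrait tile

// Height of the mural plane visible to a perspective camera looking straight at it.
function visibleHeight(cam: Camera): number {
  return 2 * cam.distance * Math.tan((cam.fovDeg * Math.PI) / 360);
}

// Returns tiles overlapping the view rectangle, plus `margin` tiles of prefetch
// on each side, clamped to the grid and sorted nearest-first from the view center.
function visibleTiles(cam: Camera, cols: number, rows: number, margin = 1): Tile[] {
  const h = visibleHeight(cam);
  const w = h * cam.aspect;
  const minCol = Math.max(0, Math.floor((cam.x - w / 2) / TILE_SIZE) - margin);
  const maxCol = Math.min(cols - 1, Math.ceil((cam.x + w / 2) / TILE_SIZE) - 1 + margin);
  const minRow = Math.max(0, Math.floor((cam.y - h / 2) / TILE_SIZE) - margin);
  const maxRow = Math.min(rows - 1, Math.ceil((cam.y + h / 2) / TILE_SIZE) - 1 + margin);

  const tiles: Tile[] = [];
  for (let row = minRow; row <= maxRow; row++) {
    for (let col = minCol; col <= maxCol; col++) tiles.push({ col, row });
  }
  // Distance from each tile's center to the camera's center point.
  const dist = (t: Tile) =>
    Math.hypot((t.col + 0.5) * TILE_SIZE - cam.x, (t.row + 0.5) * TILE_SIZE - cam.y);
  return tiles.sort((a, b) => dist(a) - dist(b));
}

// Picks up to `budget` visible tiles not yet requested (recording them in
// `requested`), so the closest portraits are fetched first and culled ones never are.
function planLoads(visible: Tile[], requested: Set<string>, budget: number): Tile[] {
  const out: Tile[] = [];
  for (const t of visible) {
    if (out.length >= budget) break;
    const key = `${t.col},${t.row}`;
    if (!requested.has(key)) {
      requested.add(key);
      out.push(t);
    }
  }
  return out;
}
```

A render loop could call `visibleTiles` whenever the camera moves, mount only those tiles, and pass the result to `planLoads` with a small per-frame budget. Image fetches would then stay proportional to what is on screen rather than to the size of the mural.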

Impact

Mosaic generated over 2,000 AI portraits and was showcased internally across Microsoft, earning attention not just for its technical execution but for its human-centric perspective. In a company defined by engineering, Mosaic introduced a different lens — showing how AI could explore emotion, not just optimize productivity.

Built at Fueled in partnership with Microsoft Research.